How do you measure the performance of a network? As a rule, the lower the latency, the faster the connection feels. An average latency of about 100 milliseconds is generally considered acceptable. Some online video games require less than 50 ms.
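To make those two figures concrete, here is a minimal Python sketch that averages a handful of latency samples and compares the result with the 100 ms and 50 ms figures mentioned above. The function name `summarize_latency`, the constant names, and the sample values are made up for illustration; real samples would come from whatever measuring tool you use.

```python
from statistics import mean

# Thresholds taken from the text above (illustrative constants).
ACCEPTABLE_MS = 100.0   # acceptable average latency
GAMING_MS = 50.0        # some online games need less than this


def summarize_latency(samples_ms):
    """Average a list of latency samples (in ms) and rate the result."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    average = mean(samples_ms)
    return {
        "average_ms": average,
        "acceptable": average <= ACCEPTABLE_MS,
        "ok_for_gaming": average < GAMING_MS,
    }


# Hypothetical samples: fine for browsing, too slow for a demanding game.
print(summarize_latency([40, 60, 80]))
```

An average hides spikes, so in practice it can also be worth looking at the worst sample, not only the mean.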

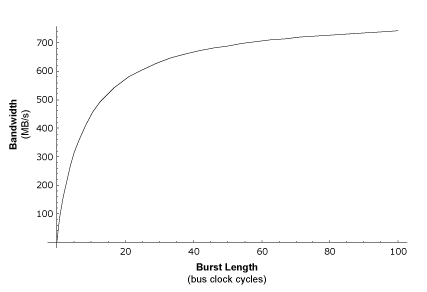

The importance of connection speed and bandwidth

Latency is strongly linked to the connection speed and bandwidth of a network. These two terms are often confused and equated with "speed," meaning the rate at which we can upload and download files. Service providers advertise that their internet connections have speeds of 50 Mbps (megabits per second). The truth is that the 50 Mbps figure has little to do with speed and more to do with the amount of data the connection can receive per second, that is, the bandwidth. In this case, 50 Mbps is the maximum throughput that we could get.

We know these are confusing terms, but we will try to explain them simply and clearly: latency is a way of measuring connection speed. Ironically, bandwidth is not a measure of speed, although everyone refers to it as if it were. The best way to explain the difference is by using a highway as an example. Bandwidth has to do with how wide the highway is. Latency has to do with the vehicles on the highway: how fast a car moves from one end to the other.

An example to measure latency would be five cars on a highway. How long would it take them to get from point A to point B on a five-lane highway (low latency, high bandwidth) compared with the same number of cars making the same trip on only two lanes (low latency, low bandwidth)?
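The difference between the two terms can also be shown with a little arithmetic. The sketch below uses a deliberately simplified model, which is an assumption and not how a real network behaves in detail: delivery time is the latency plus the payload size divided by the bandwidth. The function name `transfer_time_seconds` and the example sizes are made up for illustration.

```python
def transfer_time_seconds(size_bytes, bandwidth_mbps, latency_ms):
    """Estimate delivery time as latency + size / bandwidth.

    Simplified model (assumption): ignores protocol overhead,
    congestion, retransmissions and connection setup.
    """
    if bandwidth_mbps <= 0:
        raise ValueError("bandwidth_mbps must be positive")
    bits = size_bytes * 8                            # bytes -> bits
    sending = bits / (bandwidth_mbps * 1_000_000)    # seconds spent sending
    return latency_ms / 1000.0 + sending


# Same 50 Mbps link, two different latencies.
for latency_ms in (20, 200):
    big = transfer_time_seconds(25_000_000, 50, latency_ms)   # 25 MB file
    small = transfer_time_seconds(1_000, 50, latency_ms)      # 1 KB request
    print(f"{latency_ms} ms latency: 25 MB in {big:.2f} s, 1 KB in {small:.5f} s")
```

Under this model the large download takes roughly four seconds either way, because bandwidth dominates, while the small request takes about ten times longer on the high-latency link. That is why a "50 Mbps" connection can still feel slow.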

Latency: A concept strongly linked to connection speed and bandwidth of a network.

With regard to latency, let's start by defining it: latency is a networking term for the total time it takes a data (information) packet to travel from a source node to a destination node. Every physical system with separation (distance) between source and destination will experience some kind of latency. In the field of human interaction with computer systems, perceptible connection latency has a strong effect on user satisfaction and usability. Latency is generally measured in milliseconds (ms). In other words, the lower the number of milliseconds, the lower the latency and the more efficiently the network behaves; therefore, the user will have a better experience.
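One rough way to put a number on latency from your own machine is to time how long it takes to open a TCP connection. The Python sketch below is only an approximation under stated assumptions: a TCP handshake involves a round trip, so the figure is closer to round-trip time than to the one-way travel time in the definition above, and it also includes local overhead (pass an IP address to avoid counting a DNS lookup). The function name `tcp_connect_latency_ms` is made up for illustration.

```python
import socket
import time


def tcp_connect_latency_ms(host, port, timeout=2.0):
    """Return the time in milliseconds needed to open a TCP connection.

    Approximation (assumption): the handshake takes about one round
    trip, so this is nearer to round-trip time than one-way latency.
    """
    start = time.perf_counter()
    # create_connection raises OSError if the host cannot be reached.
    with socket.create_connection((host, port), timeout=timeout):
        pass  # connection opened; nothing needs to be sent
    return (time.perf_counter() - start) * 1000.0
```

For example, `tcp_connect_latency_ms("example.com", 443)` would return the connection time in milliseconds to that host, provided it is reachable. Taking several measurements and averaging them gives a steadier figure than a single sample.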
